ParallelSum

ParallelSum[expr,{i,imax}]

evaluates in parallel the sum $\sum_{i=1}^{i_{max}} \mathit{expr}$.

ParallelSum[expr,{i,imin,imax}]

starts with i=imin.

ParallelSum[expr,{i,imin,imax,di}]

uses steps di.

ParallelSum[expr,{i,{i1,i2,…}}]

uses successive values i1, i2, ….

ParallelSum[expr,{i,imin,imax},{j,jmin,jmax},…]

evaluates in parallel the multiple sum $\sum_{i=i_{min}}^{i_{max}} \sum_{j=j_{min}}^{j_{max}} \cdots\, \mathit{expr}$.

Details and Options

- ParallelSum is a parallel version of Sum, which automatically distributes partial summations among different kernels and processors.

- ParallelSum will give the same results as Sum, except for side effects during the computation.

- Parallelize[Sum[expr,iter,…]] is equivalent to ParallelSum[expr,iter,…].

- If an instance of ParallelSum cannot be parallelized, it is evaluated using Sum.

- The following options can be given:

| Option | Default | Description |
| --- | --- | --- |
| Method | Automatic | granularity of parallelization |
| DistributedContexts | $DistributedContexts | contexts used to distribute symbols to parallel computations |
| ProgressReporting | $ProgressReporting | whether to report the progress of the computation |

- The Method option specifies the parallelization method to use. Possible settings include:

| Setting | Description |
| --- | --- |
| "CoarsestGrained" | break the computation into as many pieces as there are available kernels |
| "FinestGrained" | break the computation into the smallest possible subunits |
| "EvaluationsPerKernel"->e | break the computation into at most e pieces per kernel |
| "ItemsPerEvaluation"->m | break the computation into evaluations of at most m subunits each |
| Automatic | compromise between overhead and load balancing |

- Method->"CoarsestGrained" is suitable for computations involving many subunits, all of which take the same amount of time. It minimizes overhead, but does not provide any load balancing.

- Method->"FinestGrained" is suitable for computations involving few subunits whose evaluations take different amounts of time. It leads to higher overhead, but maximizes load balancing.

- The DistributedContexts option specifies which symbols appearing in expr have their definitions automatically distributed to all available kernels before the computation.

- The default value is DistributedContexts:>$DistributedContexts with $DistributedContexts:=$Context, which distributes definitions of all symbols in the current context, but does not distribute definitions of symbols from packages.

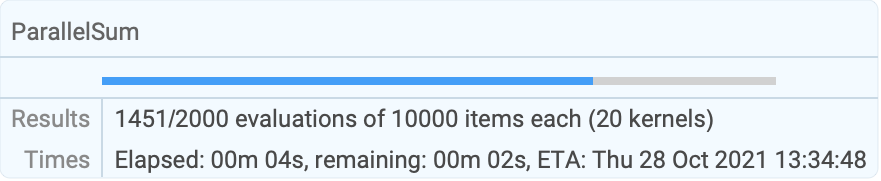

- The ProgressReporting option specifies whether to report the progress of the parallel computation.

- The default value is ProgressReporting:>$ProgressReporting.

Examples

Basic Examples (1)
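A minimal sketch of a basic call, not taken from the original page. The summand `i^2` and the upper limit 1000 are illustrative choices:

```wolfram
(* Sum i^2 for i = 1, ..., 1000; partial sums are computed on the parallel kernels *)
ParallelSum[i^2, {i, 1000}]
(* 333833500, the same value that Sum[i^2, {i, 1000}] gives *)
```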

Options (9)

Method (2)
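The two granularity extremes described under Details and Options can be sketched as follows. The summands are illustrative assumptions: `Pause` with a random duration stands in for terms of uneven cost, and `Boole[PrimeQ[i]]` for many cheap, uniform terms.

```wolfram
(* Few terms of varying cost: schedule each term separately for load balancing *)
ParallelSum[Pause[RandomReal[{0, 0.1}]]; i, {i, 20}, Method -> "FinestGrained"]
(* 210 *)

(* Many cheap terms of similar cost: one piece per available kernel *)
ParallelSum[Boole[PrimeQ[i]], {i, 10^5}, Method -> "CoarsestGrained"]
```

Which setting is faster depends on the number of kernels and on the cost of each term, so timing both on the target machine is the safer guide.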

DistributedContexts (5)

By default, definitions in the current context are distributed automatically:
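A minimal sketch, assuming a hypothetical helper `square` defined in the current context (it is not part of the original page):

```wolfram
(* Hypothetical helper defined in the current context, normally Global` *)
square[x_] := x^2

(* The definition of square is distributed to the parallel kernels before the sum runs *)
ParallelSum[square[i], {i, 4}]
(* 30 *)
```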

Do not distribute any definitions of functions:
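A sketch of switching distribution off, using a hypothetical function `cube` that has not been distributed before. With `DistributedContexts -> None` the parallel kernels should not receive its definition, so the terms are expected to come back unevaluated and only be evaluated on the main kernel, where the definition exists:

```wolfram
(* Hypothetical function known only to the main kernel *)
cube[x_] := x^3

(* No definitions are sent to the parallel kernels *)
ParallelSum[cube[i], {i, 4}, DistributedContexts -> None]
```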

Distribute definitions for all symbols in all contexts appearing in a parallel computation:
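A sketch with a symbol outside the current context. The context name ``mypkg` `` and the function `offset` are hypothetical and chosen only for illustration:

```wolfram
(* Hypothetical definition living in its own context *)
mypkg`offset[x_] := x + 10

(* All distributes definitions from every context that appears in the expression *)
ParallelSum[mypkg`offset[i], {i, 3}, DistributedContexts -> All]
(* 36, that is 11 + 12 + 13 *)
```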

Distribute only definitions in the given contexts:
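A sketch that restricts distribution to one context. Both contexts and both functions below are hypothetical. Only the definition of ``ctxA`f`` should reach the parallel kernels, while ``ctxB`g`` stays known to the main kernel only:

```wolfram
(* Two hypothetical definitions in separate contexts *)
ctxA`f[x_] := 2 x
ctxB`g[x_] := 3 x

(* Distribute definitions from ctxA` only *)
ParallelSum[ctxA`f[i] + ctxB`g[i], {i, 2}, DistributedContexts -> {"ctxA`"}]
```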

Restore the value of the DistributedContexts option to its default:
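A sketch, assuming the option default was changed earlier in the session with `SetOptions`. The restored value is the default stated under Details and Options:

```wolfram
(* Illustrative change of the default; an assumption, not shown on the original page *)
SetOptions[ParallelSum, DistributedContexts -> None];

(* Restore the documented default, a delayed rule pointing at $DistributedContexts *)
SetOptions[ParallelSum, DistributedContexts :> $DistributedContexts];
```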

ProgressReporting (2)

Do not show a temporary progress report:
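A minimal sketch. The `Pause` call is an illustrative way to make each term slow enough that a progress report would otherwise be noticeable:

```wolfram
(* Suppress the temporary progress report for this call only *)
ParallelSum[Pause[0.05]; i, {i, 40}, ProgressReporting -> False]
(* 820 *)
```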

Show a temporary progress report even if the default setting $ProgressReporting may be False:
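A minimal sketch using the same illustrative summand, this time forcing the report on regardless of the global default:

```wolfram
(* Request a temporary progress report even when $ProgressReporting is False *)
ParallelSum[Pause[0.05]; i, {i, 40}, ProgressReporting -> True]
(* 820 *)
```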


Possible Issues (2)

Sums with trivial terms may be slower in parallel than sequentially:
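One way to observe this is to time both versions of the same sum. The summand and range are illustrative, and the timings depend on the machine and the number of kernels, so none are quoted here:

```wolfram
(* Each term is trivial, so communication with the kernels can dominate the cost *)
AbsoluteTiming[ParallelSum[i, {i, 10^5}]]

(* The same sum evaluated sequentially on the main kernel *)
AbsoluteTiming[Sum[i, {i, 10^5}]]
```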

Splitting the computation into as few pieces as possible decreases the parallel overhead:
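A sketch of the same illustrative sum with the coarsest granularity, so that each kernel receives a single piece:

```wolfram
(* One piece per available kernel keeps scheduling and communication overhead low *)
AbsoluteTiming[ParallelSum[i, {i, 10^5}, Method -> "CoarsestGrained"]]
```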

Sum may employ symbolic methods that are faster than an iterative addition of all terms:
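A sketch of the kind of symbolic shortcut available to Sum. The summand is an illustrative choice, and the large numeric limit in the second line is only there to make the contrast with term-by-term addition visible:

```wolfram
(* With a symbolic upper limit, Sum returns a closed form without adding terms *)
Sum[i^2, {i, n}]
(* 1/6 n (1 + n) (1 + 2 n) *)

(* Timing Sum on a large explicit range shows whether such a shortcut was applied *)
AbsoluteTiming[Sum[i^2, {i, 10^7}]]
```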

History

Introduced in 2008 (7.0) | Updated in 2010 (8.0) ▪ 2021 (13.0)

Text

Wolfram Research (2008), ParallelSum, Wolfram Language function, https://reference.wolfram.com/language/ref/ParallelSum.html (updated 2021).
