SemidefiniteOptimization[f,cons,vars]

finds values of variables vars that minimize the linear objective f subject to semidefinite constraints cons.

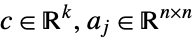

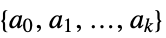

SemidefiniteOptimization[c,{a0,a1,…,ak}]

finds a vector ![]() that minimizes the quantity

that minimizes the quantity ![]() subject to the linear matrix inequality constraint

subject to the linear matrix inequality constraint ![]() .

.

SemidefiniteOptimization[…,"prop"]

specifies what solution property "prop" should be returned.

SemidefiniteOptimization

SemidefiniteOptimization[f,cons,vars]

finds values of variables vars that minimize the linear objective f subject to semidefinite constraints cons.

SemidefiniteOptimization[c,{a0,a1,…,ak}]

finds a vector ![]() that minimizes the quantity

that minimizes the quantity ![]() subject to the linear matrix inequality constraint

subject to the linear matrix inequality constraint ![]() .

.

SemidefiniteOptimization[…,"prop"]

specifies what solution property "prop" should be returned.

Details and Options

- SemidefiniteOptimization is also known as semidefinite programming (SDP) and mixed-integer semidefinite programming (MISDP).

- Semidefinite optimization is a convex optimization problem that can be solved globally and efficiently with real, integer or complex variables.

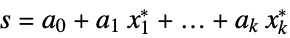

- Semidefinite optimization finds

that solves the primal problem:

that solves the primal problem: -

minimize

subject to constraints ![a_(0)+a_(1) x_(1)+...+a_(k) x_(k)>=_(TemplateBox[{n}, SemidefiniteConeList])0 a_(0)+a_(1) x_(1)+...+a_(k) x_(k)>=_(TemplateBox[{n}, SemidefiniteConeList])0](Files/SemidefiniteOptimization.en/6.png)

where

- The matrices

must be symmetric

must be symmetric  matrices.

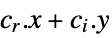

matrices. - Mixed-integer semidefinite optimization finds

and

and  that solve the problem:

that solve the problem: -

minimize

subject to constraints ![a_(0)+a_(1) x_(1)+...+a_(k_r) x_(k_r)+a_(k_r+1)y_1+...+a_(k_r+k_i)y_(k_i)>=_(TemplateBox[{n}, SemidefiniteConeList])0 a_(0)+a_(1) x_(1)+...+a_(k_r) x_(k_r)+a_(k_r+1)y_1+...+a_(k_r+k_i)y_(k_i)>=_(TemplateBox[{n}, SemidefiniteConeList])0](Files/SemidefiniteOptimization.en/13.png)

where

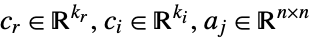

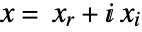

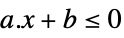

- When the objective function is real valued, SemidefiniteOptimization solves problems with

![x in TemplateBox[{}, Complexes]^n x in TemplateBox[{}, Complexes]^n](Files/SemidefiniteOptimization.en/15.png) by internally converting to real variables

by internally converting to real variables  , where

, where  and

and  . Linear matrix inequalities may be specified with Hermitian matrices

. Linear matrix inequalities may be specified with Hermitian matrices  .

. - The variable specification vars should be a list with elements giving variables in one of the following forms:

-

v variable with name  and dimensions inferred

and dimensions inferredv∈Reals real scalar variable v∈Integers integer scalar variable v∈Complexes complex scalar variable v∈ℛ vector variable restricted to the geometric region

v∈Vectors[n,dom] vector variable in  or

or

v∈Matrices[{m,n},dom] matrix variable in  or

or

- The constraints cons can be specified by:

-

LessEqual

scalar inequality GreaterEqual

scalar inequality VectorLessEqual

vector inequality VectorGreaterEqual

vector inequality Equal

scalar or vector equality Element

convex domain or region element - With SemidefiniteOptimization[f,cons,vars], parameter equations of the form parval, where par is not in vars and val is numerical or an array with numerical values, may be included in the constraints to define parameters used in f or cons. »

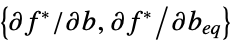

- The primal minimization problem has a related maximization problem that is the Lagrangian dual problem. The dual maximum value is always less than or equal to the primal minimum value, so it provides a lower bound. The dual maximizer provides information about the primal problem, including sensitivity of the minimum value to changes in the constraints. »

- The semidefinite optimization has a dual: »

-

maximize ![-TemplateBox[{Tr, paclet:ref/Tr}, RefLink, BaseStyle -> {2ColumnTableMod}][a_0.lambda] -TemplateBox[{Tr, paclet:ref/Tr}, RefLink, BaseStyle -> {2ColumnTableMod}][a_0.lambda]](Files/SemidefiniteOptimization.en/33.png)

subject to constraints ![TemplateBox[{Tr, paclet:ref/Tr}, RefLink, BaseStyle -> {2ColumnTableMod}][a_j.lambda]=c_j,j=1,..., k,lambda>=_(TemplateBox[{n}, SemidefiniteConeList])0 TemplateBox[{Tr, paclet:ref/Tr}, RefLink, BaseStyle -> {2ColumnTableMod}][a_j.lambda]=c_j,j=1,..., k,lambda>=_(TemplateBox[{n}, SemidefiniteConeList])0](Files/SemidefiniteOptimization.en/34.png)

where

- The possible solution properties "prop" include:

-

"PrimalMinimizer"

a list of variable values that minimizes the objective function "PrimalMinimizerRules"

values for the variables vars={v1,…} that minimize

"PrimalMinimizerVector"

the vector that minimizes

"PrimalMinimumValue"

the primal minimum value

"DualMaximizer"

the matrix that maximizes ![-TemplateBox[{Tr, paclet:ref/Tr}, RefLink, BaseStyle -> {3ColumnTableMod}][a_0.lambda] -TemplateBox[{Tr, paclet:ref/Tr}, RefLink, BaseStyle -> {3ColumnTableMod}][a_0.lambda]](Files/SemidefiniteOptimization.en/44.png)

"DualMaximumValue" ![-TemplateBox[{Tr, paclet:ref/Tr}, RefLink, BaseStyle -> {3ColumnTableMod}][a_0.lambda^*] -TemplateBox[{Tr, paclet:ref/Tr}, RefLink, BaseStyle -> {3ColumnTableMod}][a_0.lambda^*]](Files/SemidefiniteOptimization.en/45.png)

the dual maximum value "DualityGap" ![-TemplateBox[{Tr, paclet:ref/Tr}, RefLink, BaseStyle -> {3ColumnTableMod}][a_0.lambda^*] - c.x^* -TemplateBox[{Tr, paclet:ref/Tr}, RefLink, BaseStyle -> {3ColumnTableMod}][a_0.lambda^*] - c.x^*](Files/SemidefiniteOptimization.en/46.png)

the difference between the dual and primal optimal values "Slack"

matrix that converts inequality constraints to equality "ConstraintSensitivity"

sensitivity of  to constraint perturbations

to constraint perturbations"ObjectiveVector"

the linear objective vector "ConstraintMatrices"

the list of constraint matrices {"prop1","prop2",…} several solution properties - The following options may be given:

-

MaxIterations Automatic maximum number of iterations to use Method Automatic the method to use PerformanceGoal $PerformanceGoal aspects of performance to try to optimize Tolerance Automatic the tolerance to use for internal comparisons - The option Method->method may be used to specify the method to use. Available methods include:

-

Automatic choose the method automatically "CSDP" CSDP semidefinite optimization solver "DSDP" DSDP semidefinite optimization solver "SCS" SCS splitting conic solver "MOSEK" Commercial MOSEK convex optimization solver - Computations are limited to MachinePrecision.

Examples

open all close allBasic Examples (3)

Minimize ![]() subject to the linear matrix inequality constraint

subject to the linear matrix inequality constraint ![]() :

:

The optimal point is where ![]() is smallest within the region defined by the constraints:

is smallest within the region defined by the constraints:

Minimize ![]() subject to the linear matrix inequality constraint

subject to the linear matrix inequality constraint ![]() :

:

Use the equivalent formulation with the objective vector and constraint matrices:

Use the equivalent formulation with the objective vector and constraint matrices:

Scope (29)

Basic Uses (13)

Minimize ![]() subject to constraints

subject to constraints ![]() :

:

Minimize ![]() when the matrix

when the matrix ![]() is positive semidefinite:

is positive semidefinite:

Minimize ![]() subject to the linear matrix inequality constraint

subject to the linear matrix inequality constraint ![]() :

:

The left-hand side of the constraint can be given in evaluated form:

Express the problem with the objective vector and constraint matrices:

Use a vector variable ![]() and Inactive[Plus] to avoid unintended threading:

and Inactive[Plus] to avoid unintended threading:

Use a vector variable ![]() and parameter equations to avoid unintended threading:

and parameter equations to avoid unintended threading:

Use a vector variable ![]() and Indexed[x,i] to specify individual components:

and Indexed[x,i] to specify individual components:

Use Vectors[n] to specify the dimension of a vector variable when it is ambiguous:

Several linear inequality constraints can be expressed with VectorGreaterEqual:

Use ![]() v>=

v>=![]() or \[VectorGreaterEqual] to enter the vector inequality sign :

or \[VectorGreaterEqual] to enter the vector inequality sign :

An equivalent form using scalar inequalities:

Use a vector variable ![]() and vector inequality:

and vector inequality:

Specify non-negative constraints using NonNegativeReals (![]() ):

):

An equivalent form using vector inequalities:

Integer Variables (4)

Specify integer domain constraints using Integers:

Specify integer domain constraints on vector variables using Vectors[n,Integers]:

Specify non-negative integer domain constraints using NonNegativeIntegers (![]() ):

):

Specify non-positive integer domain constraints using NonPositiveIntegers (![]() ):

):

Complex Variables (2)

Specify complex variables using Complexes:

In linear matrix inequalities, the constraint matrices can be Hermitian or real symmetric:

The variables in linear matrix inequalities need to be real for the sum to remain Hermitian:

Primal Model Properties (3)

Minimize the function ![]() subject to the constraint

subject to the constraint ![]() :

:

Get the primal minimizer as a vector:

Extract the constraint matrices:

Use the extracted objective vector and constraint matrices for direct input:

The slack for an inequality ![]() at the minimizer

at the minimizer ![]() is given by

is given by ![]() :

:

Dual Model Properties (3)

The dual problem is to maximize ![]() subject to

subject to ![]() :

:

The primal minimum value and the dual maximum value coincide because of strong duality:

That is the same as having a duality gap of zero. In general, ![]() at optimal points:

at optimal points:

Construct the dual problem using constraint matrices extracted from the primal problem:

Extract the objective vector and constraint matrices:

The dual problem is to maximize ![]() subject to

subject to ![]() :

:

Get the dual maximum value and dual maximizer directly using solution properties:

Sensitivity Properties (4)

Use "ConstraintSensitivity" to find the change in optimal value due to constraint perturbations:

Consider new constraints ![]() where

where ![]() is the perturbation:

is the perturbation:

The approximate new optimal value is:

Compare to directly solving the perturbed problem:

The optimal value changes according to the signs of the sensitivity matrix elements:

At negative sensitivity element position, a positive perturbation will decrease the optimal value:

At positive sensitivity element position, a positive perturbation will increase the optimal value:

Express the perturbed constraints symbolically using Sylvester's criterion for semidefiniteness:

With this form, the minimum value can be found exactly as a function of the parameters around 0:

Make a symmetric matrix with the derivatives of the minimum with respect to the parameters:

This is the sensitivity to perturbation given by the "ConstraintSensitivity" property:

The constraint sensitivity can also be obtained as the negative of the dual maximizer:

Options (8)

Method (5)

The default method "CSDP" is an interior point method:

"DSDP" is an alternative interior point method:

"SCS" uses a splitting conic solver method:

Different methods have different default tolerances, which affects the accuracy and precision:

Compute exact and approximate solutions:

"CSDP" and "DSDP" have default tolerances of ![]() :

:

"SCS" has a default tolerance of ![]() :

:

When the default method "CSDP" produces a message, try "DSDP" first:

In this case, "DSDP" succeeds in finding a good solution:

"SCS" with a default tolerance of 10-3 is an alternative method to try:

The quality of the result with "SCS" can often be improved with a smaller Tolerance:

PerformanceGoal (1)

The default value of the option PerformanceGoal is $PerformanceGoal:

Use PerformanceGoal"Quality" to get a more accurate result:

Use PerformanceGoal"Speed" to get a result faster, but at the cost of quality:

Tolerance (2)

A smaller Tolerance setting gives a more precise result:

Compute the exact minimum value with Minimize:

Compute the error in the minimum value with different Tolerance settings:

Visualize the change in minimum value error with respect to tolerance:

A smaller Tolerance setting gives a more precise result, but may take longer to compute:

Applications (33)

Basic Modeling Transformations (13)

Maximize ![]() subject to

subject to ![]() . Solve a maximization problem by negating the objective function:

. Solve a maximization problem by negating the objective function:

Negate the primal minimum value to get the corresponding maximal value:

Find ![]() that minimizes the largest eigenvalue of a symmetric matrix that depends linearly on the decision variables

that minimizes the largest eigenvalue of a symmetric matrix that depends linearly on the decision variables ![]() ,

, ![]() . The problem can be formulated as linear matrix inequality, since

. The problem can be formulated as linear matrix inequality, since ![]() is equivalent to

is equivalent to ![]() where

where ![]() is the

is the ![]()

![]() eigenvalue of

eigenvalue of ![]() . Define the linear matrix function

. Define the linear matrix function ![]() :

:

A real symmetric matrix ![]() can be diagonalized with an orthogonal matrix

can be diagonalized with an orthogonal matrix ![]() so

so ![]() . Hence

. Hence ![]() iff

iff ![]() . Since any

. Since any ![]() , taking

, taking ![]() ,

, ![]() , hence

, hence ![]() iff

iff ![]() . Numerically simulate to show that these formulations are equivalent:

. Numerically simulate to show that these formulations are equivalent:

Run a Monte Carlo simulation to check the plausibility of the result:

Find ![]() that maximizes the smallest eigenvalue of a symmetric matrix

that maximizes the smallest eigenvalue of a symmetric matrix ![]() that depends linearly on the decision variables

that depends linearly on the decision variables ![]() . Define the linear matrix function

. Define the linear matrix function ![]() :

:

The problem can be formulated as linear matrix inequality, since ![]() is equivalent to

is equivalent to ![]() where

where ![]() is the

is the ![]()

![]() eigenvalue of

eigenvalue of ![]() . To maximize

. To maximize ![]() , minimize

, minimize ![]() :

:

Run a Monte Carlo simulation to check the plausibility of the result:

Find ![]() that minimizes the difference between the largest and the smallest eigenvalues of a symmetric matrix

that minimizes the difference between the largest and the smallest eigenvalues of a symmetric matrix ![]() that depends linearly on the decision variables

that depends linearly on the decision variables ![]() . Define the linear matrix function

. Define the linear matrix function ![]() :

:

The problem can be formulated as linear matrix inequality, since ![]() is equivalent to

is equivalent to ![]() where

where ![]() is the

is the ![]()

![]() eigenvalue of

eigenvalue of ![]() . Solve the resulting problem:

. Solve the resulting problem:

In this case, the minimum and maximum eigenvalues coincide and the difference is 0:

Minimize the largest by absolute value eigenvalue of a linear in ![]() symmetric matrix

symmetric matrix ![]() :

:

The largest eigenvalue satisfies ![]() The largest by absolute value negative eigenvalue of

The largest by absolute value negative eigenvalue of ![]() is the largest eigenvalue of

is the largest eigenvalue of ![]() and satisfies

and satisfies ![]() :

:

Find ![]() that minimizes the largest singular value

that minimizes the largest singular value ![]() of a linear in

of a linear in ![]() matrix

matrix ![]() :

:

The largest singular value ![]() of

of ![]() is the square root of the largest eigenvalue of

is the square root of the largest eigenvalue of ![]() and from a preceding example it satisfies

and from a preceding example it satisfies ![]() or equivalently

or equivalently ![]() :

:

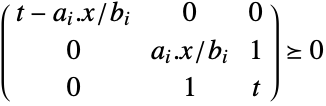

Minimize ![]() . Using an auxiliary variable

. Using an auxiliary variable ![]() , transform the problem to minimize

, transform the problem to minimize ![]() such that

such that ![]() . This is the same as

. This is the same as ![]() :

:

A Schur complement condition says that if ![]() , a block matrix

, a block matrix ![]() iff

iff ![]() . Thus

. Thus ![]() ⧦

⧦ ![]() for

for ![]() and for

and for ![]() , since then

, since then ![]() must be 0:

must be 0:

Use the constraint ![]() directly and it will automatically convert into semidefinite form:

directly and it will automatically convert into semidefinite form:

Minimize ![]() subject to

subject to ![]() , assuming

, assuming ![]() when

when ![]() . Using the auxiliary variable

. Using the auxiliary variable ![]() , the objective is to minimize

, the objective is to minimize ![]() such that

such that ![]() :

:

Using the Schur complement condition, ![]() iff

iff ![]() . Use Inactive[Plus] for constructing the constraints to avoid threading:

. Use Inactive[Plus] for constructing the constraints to avoid threading:

For quadratic sets ![]() , which include ellipsoids, quadratic cones and paraboloids, determine whether

, which include ellipsoids, quadratic cones and paraboloids, determine whether ![]() , where

, where ![]() are symmetric matrices,

are symmetric matrices, ![]() are vectors and

are vectors and ![]() scalars:

scalars:

Assuming that the sets ![]() are full dimensional, the S-procedure says that

are full dimensional, the S-procedure says that ![]() iff there exists some non-negative number

iff there exists some non-negative number ![]() such that

such that ![]() Visually see that there exists a non-negative

Visually see that there exists a non-negative ![]() :

:

Minimize ![]() subject to

subject to ![]() . Convert the objective

. Convert the objective![]() into a linear function

into a linear function ![]() with the additional constraint

with the additional constraint ![]() , which is equivalent to

, which is equivalent to ![]() :

:

Minimize ![]() subject to

subject to ![]() . Convert the objective into a linear function using

. Convert the objective into a linear function using ![]() and the additional constraints

and the additional constraints ![]() :

:

Minimize ![]() subject to

subject to ![]() . Convert the objective into a linear function

. Convert the objective into a linear function ![]() with the additional constraint

with the additional constraint ![]() , which is equivalent to

, which is equivalent to ![]() :

:

Minimize ![]() subject to

subject to ![]() , where

, where ![]() is a nondecreasing function, by instead minimizing

is a nondecreasing function, by instead minimizing ![]() . The primal minimizer

. The primal minimizer ![]() will remain the same for both problems. Consider minimizing

will remain the same for both problems. Consider minimizing ![]() , subject to

, subject to ![]() :

:

The true minimum value can be obtained by applying ![]() to the minimum value of

to the minimum value of ![]() :

:

Data-Fitting Problems (5)

Find the coefficients ![]() of a fifth-order polynomial by minimizing

of a fifth-order polynomial by minimizing ![]() that fits a discrete dataset:

that fits a discrete dataset:

Select the polynomial bases and construct the input matrix using DesignMatrix and output vector:

Using an auxiliary variable ![]() , the objective is transformed to minimize

, the objective is transformed to minimize ![]() such that

such that ![]() , which is equivalent to

, which is equivalent to ![]() as shown under Basic Modeling Transformations:

as shown under Basic Modeling Transformations:

Find an approximating function to discrete data that varies on a logarithmic scale by minimizing ![]() using Chebyshev bases:

using Chebyshev bases:

Select Chebyshev basis functions and compute their values at the random data points:

Since the data is on a logarithmic scale, direct data-fitting is not ideal. Instead, transform the problem to minimize ![]() . Using auxiliary variable

. Using auxiliary variable ![]() , minimize

, minimize ![]() such that

such that ![]() . This constraint is equivalent to

. This constraint is equivalent to  :

:

Find the coefficients of the approximating function:

The data-fitting can also be obtained directly using the function Fit. However, without the log transformation, there are significant oscillations in the approximating function:

Represent a given bivariate polynomial ![]() in terms of sum-of-squares polynomial

in terms of sum-of-squares polynomial ![]() :

:

The objective is to find ![]() such that

such that ![]() , where

, where ![]() is a vector of monomials:

is a vector of monomials:

Construct the symmetric matrix ![]() :

:

Find the polynomial coefficients of ![]() and

and ![]() and make sure they are equal:

and make sure they are equal:

The quadratic term ![]() , where

, where ![]() is a lower-triangular matrix obtained from the Cholesky decomposition of

is a lower-triangular matrix obtained from the Cholesky decomposition of ![]() :

:

Compare the sum-of-squares polynomial to the given polynomial:

Cardinality constrained least squares: minimize ![]() such that

such that ![]() has at most

has at most ![]() nonzero elements:

nonzero elements:

Let ![]() be a decision vector such that if

be a decision vector such that if ![]() , then

, then ![]() is nonzero. The decision constraints are:

is nonzero. The decision constraints are:

To model constraint ![]() when

when ![]() , chose a large constant

, chose a large constant ![]() such that

such that ![]() :

:

Using an auxiliary variable ![]() , the objective is transformed to minimize

, the objective is transformed to minimize ![]() such that

such that ![]() , which is equivalent to

, which is equivalent to ![]() :

:

Solve the cardinality constrained least-squares problem:

The subset selection can also be done more efficiently with Fit using ![]() regularization. First, find the range of regularization parameters that uses at most

regularization. First, find the range of regularization parameters that uses at most ![]() basis functions:

basis functions:

Find the nonzero terms in the ![]() regularized fit:

regularized fit:

Find the fit with just these basis terms:

Find the best subset of functions from a candidate set of functions to approximate given data:

The approximating function will be ![]() :

:

A maximum of 5 basis functions are to be used in the final approximation:

The coefficients associated with functions that are not chosen must be zero:

Geometry Problems (6)

Find the smallest disk centered at ![]() of radius

of radius ![]() that encloses a set of points:

that encloses a set of points:

For each point ![]() , the constraint

, the constraint ![]() must be satisfied. This constraint is equivalent to

must be satisfied. This constraint is equivalent to ![]() . Use Inactive when forming the constraints:

. Use Inactive when forming the constraints:

Find the enclosing disk by minimizing the radius ![]() :

:

Visualize the enclosing region:

The minimal area bounding disk can also be found using BoundingRegion:

Find the smallest ellipse parametrized as ![]() that encompasses a set of points by minimizing the area:

that encompasses a set of points by minimizing the area:

For each point ![]() , the constraint

, the constraint ![]() must be satisfied:

must be satisfied:

The area is proportional to ![]() . Applying the monotone function Log, the function to minimize is

. Applying the monotone function Log, the function to minimize is ![]() . This in turn is equivalent to minimizing

. This in turn is equivalent to minimizing ![]() :

:

Convert the parameterized ellipse into the explicit form ![]() :

:

A bounding ellipse, not necessarily minimal area, can be found using BoundingRegion:

The optimal ellipse has a smaller area:

Find the smallest ellipsoid parametrized as ![]() that encompasses a set of points in 3D by minimizing the volume:

that encompasses a set of points in 3D by minimizing the volume:

For each point ![]() , the constraint

, the constraint ![]() must be satisfied:

must be satisfied:

Minimizing the volume is equivalent to minimizing ![]() , which is equivalent to minimizing

, which is equivalent to minimizing ![]() :

:

Convert the parameterized ellipse into the explicit form ![]() :

:

A bounding ellipsoid, not necessarily minimum volume, can also be found using BoundingRegion:

Find the maximum area ellipse parametrized as ![]() that can be fitted into a convex polygon:

that can be fitted into a convex polygon:

Each segment of the convex polygon can be represented as intersections of half-planes ![]() :

:

Applying the parametrization to the half-planes gives ![]() . The term

. The term ![]() . Thus, the constraints are

. Thus, the constraints are ![]() , which is equivalent to

, which is equivalent to ![]() :

:

Minimizing the area is equivalent to minimizing ![]() , which is equivalent to minimizing

, which is equivalent to minimizing ![]() :

:

Convert the parameterized ellipse into the explicit form as ![]() :

:

Find the center ![]() and radius

and radius ![]() of a disk given by

of a disk given by ![]() that encloses three ellipses of the form

that encloses three ellipses of the form ![]() :

:

Using S-procedure, it can be shown that the disk contains the ellipses iff ![]() :

:

The goal is to minimize the radius ![]() given by

given by ![]() . Using auxiliary variable

. Using auxiliary variable ![]() , the objective is to minimize

, the objective is to minimize ![]() such that

such that ![]() , which can be written as

, which can be written as ![]() :

:

Find the center and radius of the disk:

Convert the quadratic form of the ellipse to the explicit form ![]() :

:

Test whether an ellipsoid is a subset of another ellipsoid of the form ![]() :

:

Using S-procedure, it can be shown that ellipse 2 is a subset of ellipse 1 iff ![]() :

:

Check if the condition is satisfied:

Convert the ellipsoids into explicit form and confirm that ellipse 2 is within ellipse 1:

Move ellipsoid 2 such that it overlaps with ellipsoid 1:

A test now shows that the problem is infeasible, indicating that ellipsoid 2 is not a subset of ellipsoid 1:

Classification Problems (2)

Find an ellipse that separates two groups of points ![]() and

and ![]() :

:

For separation, set 1 must satisfy ![]() and set 2 must satisfy

and set 2 must satisfy ![]() :

:

Find the coefficients of the separating ellipsoid:

Find an ellipse that is as close as possible to a circle that separates two groups of points ![]() and

and ![]() :

:

For separation, set 1 must satisfy ![]() and set 2 must satisfy

and set 2 must satisfy ![]() :

:

For the ellipsoid to be as close as possible to a circle, the constraint ![]() :

:

Find the coefficients of the separating ellipsoid by minimizing ![]() :

:

Graph Problems (3)

The Lovász number, computable using semidefinite optimization, is used as a bound for hard compute graph invariants:

The Lovász number is an upper bound for the Shannon capacity of a graph:

According to the Lovász sandwich theorem:

The Lovász number ![]() for a graph

for a graph ![]() is given by

is given by ![]() , where

, where ![]() ,

, ![]() and

and ![]() for

for ![]() . It can be written in a dual semidefinite form:

. It can be written in a dual semidefinite form: ![]() ,

, ![]()

![]() subject to

subject to ![]() and

and ![]()

![]() and 0 elsewhere:

and 0 elsewhere:

Compare to the exact Lovász number values from GraphData:

Find the approximate result for ![]() when the exact result is not available:

when the exact result is not available:

The max-cut problem determines a subset ![]() of the vertices

of the vertices ![]() of a graph, for which the sum of the weights

of a graph, for which the sum of the weights ![]() of the edges that cross from

of the edges that cross from ![]() to its complement

to its complement ![]() is maximized. Let

is maximized. Let ![]() for

for ![]() and

and ![]() for

for ![]() . Maximize

. Maximize ![]() , where

, where ![]() and

and ![]() is the Laplacian matrix of the graph:

is the Laplacian matrix of the graph:

For smaller cases, the max-cut problem can be solved exactly, but this is impractical for larger graphs since in general the problem has NP-complete complexity:

The problem minimizes ![]() , where

, where ![]() is a symmetric rank-1 positive semidefinite matrix, with

is a symmetric rank-1 positive semidefinite matrix, with ![]() for each

for each ![]() , equivalent to

, equivalent to ![]() , where

, where ![]() is the matrix with

is the matrix with ![]() at the

at the ![]() diagonal position and 0 everywhere else. To make the solution practical, solve a relaxed problem where the rank-1 condition is eliminated. For such

diagonal position and 0 everywhere else. To make the solution practical, solve a relaxed problem where the rank-1 condition is eliminated. For such ![]() , a cut is constructed by randomized rounding: decompose

, a cut is constructed by randomized rounding: decompose ![]() , let

, let ![]() be a uniformly distributed random vector of the unit norm and let

be a uniformly distributed random vector of the unit norm and let ![]() . For demonstration, a function is defined that shows the relaxed value, the rounded value and the graph with the vertices in

. For demonstration, a function is defined that shows the relaxed value, the rounded value and the graph with the vertices in ![]() shown as red:

shown as red:

Find an approximate max cut using the previously shown procedure, and compare with the exact result:

Find the max cut for a grid graph:

Find the max cut for a random graph:

Compare timings for the relaxed and exact algorithms for a Peterson graph:

Find a subset ![]() of specified graph with vertices

of specified graph with vertices ![]() such that the sum of the weights

such that the sum of the weights ![]() of the edges that cross from

of the edges that cross from ![]() to its complement

to its complement ![]() is maximized. Specify the graph:

is maximized. Specify the graph:

The objective is to maximize ![]() , where

, where ![]() is a symmetric rank-1 positive semidefinite matrix and

is a symmetric rank-1 positive semidefinite matrix and ![]() is the Laplacian matrix of the graph:

is the Laplacian matrix of the graph:

Drop the rank-1 matrix assumption and solve the resulting max-cut problem:

Control & Dynamic Systems Problems (3)

Show that a linear dynamical system ![]() will be asymptotically stable for any initial condition. The system is said to be stable iff there exists a positive definite matrix

will be asymptotically stable for any initial condition. The system is said to be stable iff there exists a positive definite matrix ![]() such that

such that ![]() where

where ![]() is called the Lyapunov function:

is called the Lyapunov function:

Differentiating the Lyapunov function ![]() gives

gives ![]() . Therefore, the stability conditions are

. Therefore, the stability conditions are ![]() :

:

The eigenvalues of ![]() are all negative, making the matrix negative definite, which proves stability:

are all negative, making the matrix negative definite, which proves stability:

Since the analytic solution to the system is ![]() , numerically verify that any system will go to zero for any initial condition. Take

, numerically verify that any system will go to zero for any initial condition. Take ![]() for this simulation:

for this simulation:

Find a controller ![]() , such that the closed-loop system

, such that the closed-loop system ![]() is stable:

is stable:

Using Lyapunov stability theorem, the objective is to find a matrices ![]() such that the stability constraints

such that the stability constraints ![]() are satisfied. Letting

are satisfied. Letting ![]() , the first constraint becomes a proper semidefinite constraint

, the first constraint becomes a proper semidefinite constraint ![]() :

:

The control matrix can be computed as ![]() :

:

The closed-loop system is stable if the real parts of the eigenvalues of ![]() are negative:

are negative:

Perform a numerical run to see that the system is stable:

Find a Lyapunov function of the dynamical system ![]() :

:

The objective is to find ![]() such that

such that ![]() , where

, where ![]() is a vector of monomials:

is a vector of monomials:

Match the coefficients such that they are all positive for ![]() and negative for

and negative for ![]() :

:

The Lyapunov function is given by:

Visualize the Lyapunov function. The minimal location of the function matches with the location of the attractor:

Structural Optimization Problems (1)

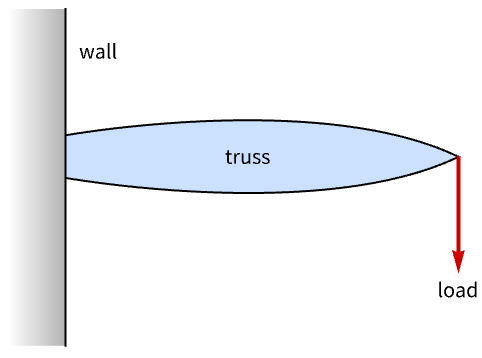

Design a minimum-weight truss that is anchored on one end of the wall and must withstand a load on the other end:

The truss can be modeled using links and nodes. Each node is connected to a neighboring node by a link. Specify the node positions ![]() :

:

Specify the nodes that are anchored to the wall:

Specify the node at which the load is applied:

Specify the nodes that are connected to each other through a link and compute the length of each link:

Visualize the unoptimized truss:

The links are circular bars. Each link must be formed from one out of a group of bars of cross sections ![]() . Let

. Let ![]() be a decision vector for each link

be a decision vector for each link ![]() , such that if

, such that if ![]() , then bar

, then bar ![]() is selected. For link

is selected. For link ![]() , the area is then defined as

, the area is then defined as ![]() . The objective is to minimize the weight:

. The objective is to minimize the weight:

The bar selection constraint is:

Only one bar must be selected for each link. The binary constraint is:

Find the indices of the nodes that are not anchored:

The stiffness matrix of the system is given by ![]() , where

, where ![]() is the total number of nodes,

is the total number of nodes, ![]() is the number of nodes that are anchored and

is the number of nodes that are anchored and ![]() is the set of all the links. The vector

is the set of all the links. The vector ![]() if

if ![]() ;

; ![]() if

if ![]() , else 0:

, else 0:

Let ![]() be the force vector for the entire system. At each of the nodes that is not anchored, the force is

be the force vector for the entire system. At each of the nodes that is not anchored, the force is ![]() . The node where force is applied is

. The node where force is applied is ![]() :

:

Let ![]() be the maximum allowable deflection at any node. Let

be the maximum allowable deflection at any node. Let ![]() be displacement of node

be displacement of node ![]() ; then

; then ![]() is satisfied if

is satisfied if ![]() , where

, where ![]() is the stiffness matrix associated with link

is the stiffness matrix associated with link ![]() :

:

Find the optimal structure of the truss:

Extract the links that are part of the optimal truss:

Visualize the optimal truss. The links that are part of the optimal truss are colored-coded based on the rod area being used:

Properties & Relations (8)

SemidefiniteOptimization gives the global minimum of the objective function:

Plot the objective function with the minimum value over the feasible region:

Minimize gives global exact results for semidefinite problems:

Compare to SemidefiniteOptimization:

NMinimize can be used to get inexact results using global methods:

FindMinimum can be used to obtain inexact results using local methods:

ConicOptimization is more general than SemidefiniteOptimization:

SecondOrderConeOptimization is a special case of SemidefiniteOptimization:

QuadraticOptimization is a special case of SemidefiniteOptimization:

Use auxiliary variable ![]() and minimize

and minimize ![]() with additional constraint

with additional constraint ![]() :

:

LinearOptimization is a special case of SemidefiniteOptimization:

Possible Issues (5)

The constraints at the optimal point are satisfied up to some tolerance:

With default options, the constraint violation tolerance is 10-8:

The minimum value of an empty set or infeasible problem is defined to be ![]() :

:

The minimizer is Indeterminate:

The minimum value for an unbounded set or unbounded problem is ![]() :

:

The minimizer is Indeterminate:

Dual related solution properties for mixed-integer problems may not be available:

Although the constraint matrices can be Hermitian, the variables need to be real:

Vectors[n] automatically evaluates to Vectors[n,Complexes]:

For problems with no complex numbers in the specification, the vector variable v ∈Vectors[n] is considered real valued; otherwise, you need to explicitly give the domain as Vectors[n,Reals]:

Tech Notes

Related Guides

Text

Wolfram Research (2019), SemidefiniteOptimization, Wolfram Language function, https://reference.wolfram.com/language/ref/SemidefiniteOptimization.html (updated 2020).

CMS

Wolfram Language. 2019. "SemidefiniteOptimization." Wolfram Language & System Documentation Center. Wolfram Research. Last Modified 2020. https://reference.wolfram.com/language/ref/SemidefiniteOptimization.html.

APA

Wolfram Language. (2019). SemidefiniteOptimization. Wolfram Language & System Documentation Center. Retrieved from https://reference.wolfram.com/language/ref/SemidefiniteOptimization.html

BibTeX

@misc{reference.wolfram_2025_semidefiniteoptimization, author="Wolfram Research", title="{SemidefiniteOptimization}", year="2020", howpublished="\url{https://reference.wolfram.com/language/ref/SemidefiniteOptimization.html}", note=[Accessed: 28-April-2026]}

BibLaTeX

@online{reference.wolfram_2025_semidefiniteoptimization, organization={Wolfram Research}, title={SemidefiniteOptimization}, year={2020}, url={https://reference.wolfram.com/language/ref/SemidefiniteOptimization.html}, note=[Accessed: 28-April-2026]}